PCI Express 2.0: Scalable Interconnect Technology, TNG

by Kris Boughton on January 5, 2008 2:00 AM EST - Posted in CPUs

PCI Express Backwards Compatibility

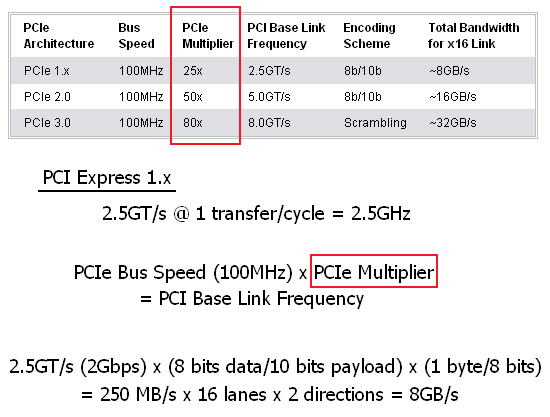

PCI Express is a layered protocol composed of three distinct layers: the physical layer (PHY), the data link layer (DLL), and the transaction layer (TL). This modular approach is what allowed the fundamental change in the physical layer data rate, from 2.5GT/s to 5.0GT/s, to go unnoticed by the upper layers. The PCI-E bus speed (the 100MHz reference clock) remains unchanged; the only thing that changed is the rate at which data is transferred across the link. This implies that significant signal manipulation is required on both the transmit and receive ends of the pipe before the data is available for use. In the end, the change is akin to selecting a higher multiplier for a CPU: although the processor operates at an increased frequency, it continues to communicate with the interface component at the same rate. This analogy lets us introduce the concept of a "PCI-E Multiplier": the data rate divided by the 100MHz clock, which works out to 25x for PCI-E 1.x (2.5GT/s) and 50x for PCI-E 2.0 (5.0GT/s). This number is static and cannot be changed, except by the motherboard during the link training process. PCI Express 3.0 should further increase the PCI-E Multiplier to 80x, which will bring the base link frequency very near the maximum theoretical switching rate for copper (~10Gbps).
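The multiplier arithmetic above can be checked with a short sketch. Beyond what this page states, it assumes that PCI-E 1.x and 2.0 encode every 8 data bits as 10 line bits (8b/10b) and that PCI-E 3.0 uses 128b/130b encoding; the function and constant names are illustrative only.

```python
# Sketch: the "PCI-E Multiplier" and per-direction link bandwidth.
# Assumptions not stated in the article: 8b/10b encoding for 1.x/2.0,
# 128b/130b for 3.0, and 1 GB = 10**9 bytes.

REF_CLOCK_MHZ = 100  # reference clock, unchanged across generations

# generation -> (transfer rate in GT/s, fraction of line bits that carry data)
GENERATIONS = {
    "1.x": (2.5, 8 / 10),
    "2.0": (5.0, 8 / 10),
    "3.0": (8.0, 128 / 130),
}


def multiplier(rate_gts: float) -> float:
    """Transfer rate divided by the 100MHz reference clock."""
    return rate_gts * 1000 / REF_CLOCK_MHZ


def link_bandwidth_gb_s(rate_gts: float, efficiency: float, lanes: int) -> float:
    """Usable bandwidth in one direction, in GB/s (8 bits per byte)."""
    return rate_gts * efficiency * lanes / 8


for gen, (rate, eff) in GENERATIONS.items():
    print(f"PCI-E {gen}: {multiplier(rate):.0f}x, "
          f"x1 = {link_bandwidth_gb_s(rate, eff, 1):.3f} GB/s, "
          f"x16 = {link_bandwidth_gb_s(rate, eff, 16):.2f} GB/s per direction")
```

Under these assumptions an x16 PCI-E 2.0 link works out to 8GB/s in each direction, the same figure quoted in the comments.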

As we mentioned before, installing a PCI Express 1.x card in a PCI Express 2.0 compliant slot will result in PCI Express 1.x speeds. The same goes for installing a PCI Express 2.0 card in a PCI Express 1.x compliant slot. In every case, the system will operate at the lowest common speed, because all PCI-E 2.0 devices must be engineered to run at legacy PCI-E 1.x speeds.
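The "lowest common speed" rule can be modeled as a set intersection. This is a deliberately simplified sketch, not a description of how PCI-E link training (the LTSSM defined in the specification) actually proceeds; the rate constants and the `negotiated_rate` function are hypothetical.

```python
# Simplified model of speed negotiation: each link partner advertises the
# data rates it supports, and the link runs at the fastest rate common to both.
# Illustration only; real PCI-E link training is far more involved.

GEN1 = 2.5  # GT/s, PCI-E 1.x
GEN2 = 5.0  # GT/s, PCI-E 2.0


def negotiated_rate(slot_rates: set[float], card_rates: set[float]) -> float:
    """Return the highest transfer rate supported by both ends of the link."""
    common = slot_rates & card_rates
    if not common:
        raise ValueError("no common data rate; link cannot train")
    return max(common)


pcie1_slot = {GEN1}
pcie2_slot = {GEN1, GEN2}  # 2.0 devices must also support the 1.x rate
pcie1_card = {GEN1}
pcie2_card = {GEN1, GEN2}

print(negotiated_rate(pcie2_slot, pcie1_card))  # 2.5
print(negotiated_rate(pcie1_slot, pcie2_card))  # 2.5
print(negotiated_rate(pcie2_slot, pcie2_card))  # 5.0
```

Because every 2.0 device includes the 1.x rate in its set, the intersection is never empty for any mix of 1.x and 2.0 parts, which is the backward-compatibility guarantee in model form.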

Unfortunately, there have been some reports of new PCI-E 2.0 graphics cards refusing to POST (Power On Self-Test) in motherboards with chipsets that lack PCI Express 2.0 support. We can assure you this is not by design, as the PCI-SIG PCI Express 2.0 Specification is very clear on the issue: PCI Express 2.0 is backward-compatible with PCI Express 1.x in every case. With this in mind, and knowing that some PCI Express 1.x motherboards have no problems running the new graphics cards while others do, we have no choice but to blame the board when there is no video. Unfortunately, this makes it difficult to determine whether problems await during the upgrade process; you must first consult with others who use the same video card/motherboard combination. Thankfully, cases of incompatibility seem to be few and far between.

21 Comments


kjboughton - Sunday, January 6, 2008

Are you sure this isn't fiber or optical? Any supporting information you can provide would be great.

Hulk - Saturday, January 5, 2008

First of all, great article. Great writing. You should be proud of that article.

I see that currently the Southbridge can transmit data to the Northbridge at 2GB/sec max. In real-world situations, roughly how much bandwidth would the Southbridge require under light, medium, and heavy loading?

kjboughton - Monday, January 7, 2008

I can help you with some of the base information needed to calculate this yourself (since every system is different, depending on the attached peripherals and their type), and we'll leave the rest to you as an exercise.

For example, a 1Gbps Ethernet connection to the ICH would have a maximum theoretical sustained data transfer rate of 125MB/s (1Gbps x 1 byte/8 bits). A single SATA 3.0Gbps drive would be limited by the interface to three times this number, or about 375MB/s (closer to 300MB/s of actual payload once 8b/10b encoding is accounted for; the disk-to-bus/cache transfer rate is much less, somewhere on the order of 120-140MB/s sustained). Nevertheless, burst read speeds could easily saturate the bus in one direction (1GB/s). Then there are USB devices, possibly a sound card or another onboard solution... Going through the numbers and adding up the maximum possible bandwidth for all your attached devices, you should be able to get an idea of what would be "light, medium and heavy" loading for your system. Again, this varies from system to system. Hope this helps.
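The exercise kjboughton describes, adding up worst-case device bandwidth and comparing it with the southbridge link, can be sketched as follows. The device list is hypothetical, the 1GB/s per-direction budget assumes the 2GB/s figure mentioned above is the combined total for both directions, and the rates are raw line rates divided by 8 (as in the comment), so they overstate usable throughput.

```python
# Hypothetical worst-case bandwidth budget for devices behind the southbridge.
# Rates are raw line rates divided by 8, as in the comment above; real
# throughput is lower (e.g. SATA and USB encoding/protocol overhead).

LINK_BUDGET_MB_S = 1000.0  # assumed: 2GB/s combined, so 1GB/s per direction


def mb_per_s(gbps: float) -> float:
    """Convert a raw line rate in Gbps to MB/s (1 byte = 8 bits, 1 Gb = 1000 Mb)."""
    return gbps * 1000 / 8


# Hypothetical "heavy" configuration; edit to match your own system.
devices = {
    "1Gbps Ethernet": mb_per_s(1.0),          # 125 MB/s
    "SATA 3.0Gbps drive #1": mb_per_s(3.0),   # 375 MB/s raw
    "SATA 3.0Gbps drive #2": mb_per_s(3.0),   # 375 MB/s raw
    "USB 2.0 (480Mbps)": mb_per_s(0.48),      # about 60 MB/s raw
}

total = sum(devices.values())
share = 100 * total / LINK_BUDGET_MB_S
print(f"worst case: {total:.0f} of {LINK_BUDGET_MB_S:.0f} MB/s ({share:.0f}% of one direction)")
for name, rate in sorted(devices.items(), key=lambda kv: kv[1], reverse=True):
    print(f"  {name}: {rate:.0f} MB/s")
```

Swapping devices in or out of the dictionary gives a rough "light, medium and heavy" picture; the result is an upper bound, since devices rarely burst at full rate simultaneously.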

LTG - Saturday, January 5, 2008

Excellent article, good tech level.

Would you believe "simple ecards" benefit from PCI-E 2.0 right now?

At most sites, when you send an ecard, it just e-mails a link to a flash animation to someone.

However, when you send an ecard at the site below, it renders and composites custom photos and messages into a 3D scene on the fly for each card sent.

Because this is a web site all of this runs on the server side for many users at once.

PCI-E 3.0 will be welcome :).

http://www.hdgreetings.com/preview.aspx?name=count...

or

www.hdgreetings.com (sorry, link buttons not working)

JarredWalton - Saturday, January 5, 2008

I don't know that e-cards would really benefit much, especially right now. The FSB and memory bandwidth aren't much more than what an x16 PCI-E 2.0 slot can provide in one direction (8GB/s). I would imagine memory capacity and the storage subsystem, not to mention network bandwidth, are larger factors than the PCI-E bus.

Are you affiliated with that site at all? If so, I'd be very interested to see a performance comparison between a single 8800 GTX and an 8800 GT on a PCI-E 2.0 capable motherboard. The 8800 GTX has a memory and performance advantage, but if, as you say, the bottleneck is the PCI-E bus, you should still see a performance increase from the PCI-E 2.0 capable 8800 GT.

LTG - Sunday, January 6, 2008

Hi Jarred, yes, I'm a developer on the site (please don't think of my post as spam; I've been a reader at AT forever and it just seemed relevant :)).

You could be right; we are just now starting to test PCI-E 2.0, so the benchmark you mention will definitely shed some light.

The network is not a bottleneck because cards are rendered and compressed on a given server node.

The disk IO is a 6-drive RAID0 array (no data is at risk because the nodes just render jobs). The Seagate 7200.11 drives max out at about 100MB/sec each, so the array tops out at roughly 600MB/sec total. However, I have "heard" that the effective PCI-E video card bandwidth is much less than the theoretical limit.

I wish there were a utility to easily measure PCI-E bandwidth, but currently I only know of indirect experiments like the one you mention.

Thanks again for the nice article.

PizzaPops - Saturday, January 5, 2008

I can't help but be amazed by the speed at which hardware is improving. I remember when we were stuck with just PCI and AGP for what seemed like forever. Now the speeds are getting ridiculous. Can't wait to see what the future has in store.

Very informative article, and not too difficult for the average person to understand either. Now I know why my X38 gets so hot.

Spoelie - Saturday, January 5, 2008

Why does the 790FX stay so cool, then? Besides, there hasn't been a review of that one on AT yet.

Gary Key - Saturday, January 5, 2008

The 790FX does not have the memory controller on board (AMD puts it on the CPU), among other items, so the additional power required for PCI-E 2.0 is minimal at best, as is the resulting thermal increase. AMD also took a very elegant approach to PCI-E 2.0 on the 790FX that we will cover shortly (they had time to ensure proper integration; Intel's is fine, they just had a lot to cram into the chipset this time around ;) ).

NVIDIA's current approach on the 780i is to use a bridge chip, which is creating a few problems for us right now when overclocking both the bus and the video card. We will have a complete 790FX roundup the week of the 14th, along with a "how to" guide on getting the most out of Phenom on these boards.

Comdrpopnfresh - Monday, January 7, 2008

So by bridge chip, you mean some intermediate chip slows things down? Or does it create asymmetric latencies leading to unbalanced clocks (like the early SATA drives that implemented connections and features like NCQ natively on PATA with a cross-over to SATA)?

If so, I read an article somewhere dealing with a similar problem with RAID-spanning of SSDs... I'll have to dig up the link...

The problem began with one drive on the Intel ICH9 (I understand there is a workaround now). So the tester switched to a discrete add-in RAID card. As they added more and more drives (I believe they went 1-2-3-4), these very expensive RAID cards choked on the throughput one after another (with successively higher prices, of course), because the companies had skimped on onboard processing power: before SSDs, the throughput of a RAID array spanning standard HDDs just wasn't nearly as high. When they got to four drives and an add-in card costing more than $900, they stopped. One hell of an expensive review!